TEDx

Intuitive interfaces make all the difference in interactive work. If participants can't quickly, almost subconsciously, grasp how to manipulate a piece, the interaction fails. How can we use a fun, familiar interface to bring down the barrier between participant and performer?

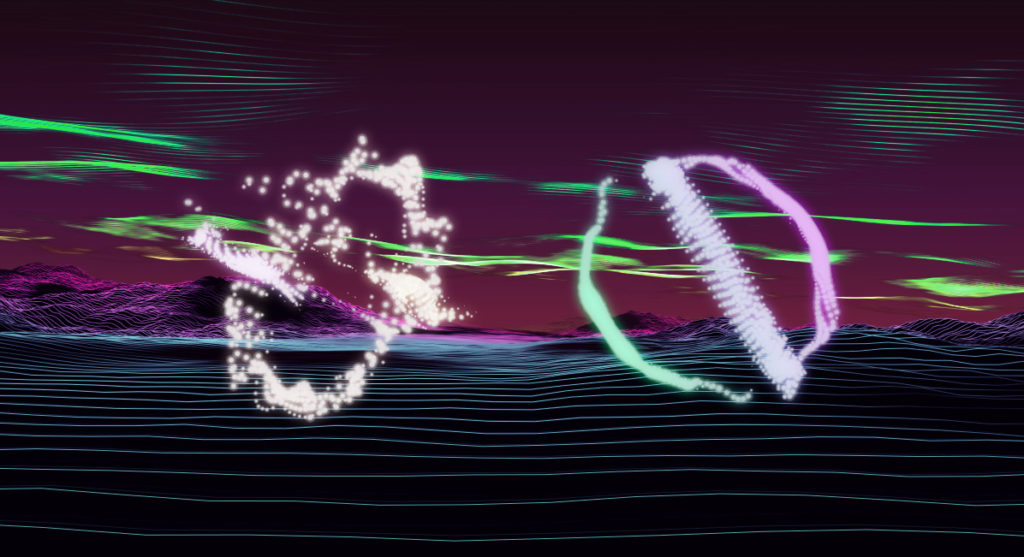

Using Max/MSP, Ableton Live, and TouchDesigner, we turned wireless USB Guitar Hero controllers into an intuitive interface for manipulating audio-visual events during a performance by Matt Davis and Peter Sistrom on 04.12.2011.

We read the trigger buttons, gyroscopic accelerometer, and whammy bar so participants could play samples, manipulate them with effects, and move the samples' visual counterparts around by twisting the controllers.
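To give a feel for what this kind of mapping involves, here is a rough Python sketch that turns controller readings into raw MIDI bytes of the sort Ableton Live can respond to. It is not the patch we used (that lives in Max/MSP), and everything specific in it is an assumption made for illustration: the `ControllerState` fields, the note numbers in `BUTTON_NOTES`, the controller numbers `WHAMMY_CC` and `TILT_CC`, and the value ranges for the whammy bar and tilt.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Standard MIDI status bytes (the low nibble carries the channel).
NOTE_OFF = 0x80
NOTE_ON = 0x90
CONTROL_CHANGE = 0xB0

# Hypothetical assignments, chosen only for this example.
BUTTON_NOTES = (60, 62, 64, 65, 67)  # one note per trigger button
WHAMMY_CC = 1                        # controller number for the whammy bar
TILT_CC = 2                          # controller number for the tilt reading


@dataclass(frozen=True)
class ControllerState:
    buttons: Tuple[bool, ...]  # five trigger buttons, True = pressed
    whammy: float              # assumed range 0.0 .. 1.0
    tilt: float                # assumed range -1.0 .. 1.0


def scale_to_midi(value: float, lo: float, hi: float) -> int:
    """Clamp value to [lo, hi] and rescale it to the 0..127 MIDI range."""
    value = min(max(value, lo), hi)
    return round((value - lo) / (hi - lo) * 127)


def to_midi(prev: ControllerState, curr: ControllerState,
            channel: int = 0) -> List[bytes]:
    """Return MIDI messages describing what changed between two readings."""
    messages = []
    for note, was, now in zip(BUTTON_NOTES, prev.buttons, curr.buttons):
        if now and not was:      # button pressed: start the sample
            messages.append(bytes([NOTE_ON | channel, note, 100]))
        elif was and not now:    # button released: stop the sample
            messages.append(bytes([NOTE_OFF | channel, note, 0]))
    if curr.whammy != prev.whammy:
        value = scale_to_midi(curr.whammy, 0.0, 1.0)
        messages.append(bytes([CONTROL_CHANGE | channel, WHAMMY_CC, value]))
    if curr.tilt != prev.tilt:
        value = scale_to_midi(curr.tilt, -1.0, 1.0)
        messages.append(bytes([CONTROL_CHANGE | channel, TILT_CC, value]))
    return messages
```

Only changes between readings are sent, so a held button produces a single note-on rather than a stream of them, while the whammy bar and tilt become continuous controller values that can be mapped to effect parameters.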

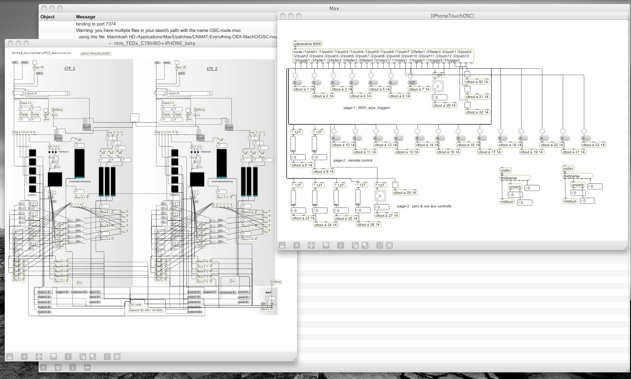

Here’s a look at the Max/MSP control system. It takes USB input from the controllers, as well as OSC from Matt’s iPhone, and converts the data to MIDI: